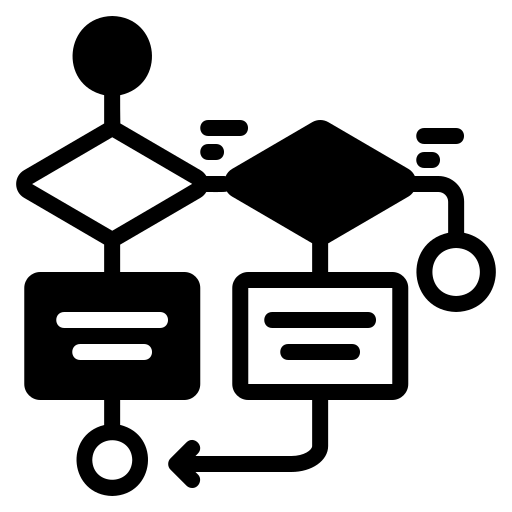

Referents + Perception = Understanding.

Logic vs. Statistics

Large language models (LLMs) are based on statistics. Using basically all of humanity’s written words as a reference, they take a particular prompt and what has been said so far, then guess what the next word (or character) in the response will be. The result is the familiar sterile-yet-polite tone and word choice that define ChatGPT, Bard, and the others. Humans, on the other hand, do not use statistical reasoning in conversation to guess the next word in a sentence. Instead, we use an amalgamation of referents, rules of grammar and syntax[1], the logic our perceptions follow, and our own reasoning to choose our words. Sometimes carefully, other times not.
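To make "guess the next word from statistics" concrete, here is a toy sketch. It is nowhere near how a real LLM is built (those use neural networks trained on enormous corpora); it only counts which word follows which in a tiny made-up sentence. The function names `train_bigrams` and `predict_next` and the sample corpus are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following


def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Made-up corpus, purely for illustration.
corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # "cat" follows "the" more often than "mat" or "sofa"
print(predict_next(model, "zebra"))  # never seen, so there is nothing to guess from
```

Notice that nothing in this sketch knows what a cat or a mat is. It only knows which words tend to sit next to each other.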

But these things do not exist in pure statistics. We’re taught an elementary version of this in any Stats 101 class: correlation does not imply causation. In other words, just because things are linked statistically does not mean there’s a reason for it. Statistical models also contain room for error. The logic most people are familiar with does not.
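A small sketch of the Stats 101 point, using invented numbers: ice cream sales and sunburn cases are both made to depend on temperature, so they correlate almost perfectly even though neither causes the other. The data and the helper `pearson` are assumptions for illustration, not real measurements.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists of numbers."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


# Invented data: temperature is the hidden common cause of both series.
temperature = [15, 18, 22, 25, 29, 33]
ice_cream_sales = [2 * t + 10 for t in temperature]
sunburn_cases = [3 * t - 20 for t in temperature]

r = pearson(ice_cream_sales, sunburn_cases)
print(r)  # very close to 1, yet ice cream does not cause sunburn
```

The statistic reports the link faithfully. The reason behind the link is not in the numbers at all.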

The Logic We Use

Deductive

In deductive reasoning (which is what formal logic falls under), we begin with things that are obviously true. For example, the statement “a point is that which has no dimension” is true[2]. As is the statement “three lines define a plane”[3]. By combining these statements, which we know are always true, we arrive at new statements. We then know that these statements are always true. There’s no room for error in deductive systems, which is why deductive logic has power. However, this power rests upon the fact that our initial statements are always true. If a single one of those statements is not necessarily true, then any statement(s) built atop it come into question, too.
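The way deduction builds new statements from accepted ones can be sketched in a few lines. This is a minimal illustration, assuming statements are plain strings and each rule says "if all of these are known, then this follows". The `deduce` function and the Socrates rules are hypothetical examples, not a real logic engine.

```python
def deduce(premises, rules):
    """Repeatedly apply 'if all conditions hold, the conclusion holds' until nothing new appears."""
    known = set(premises)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conclusion not in known and all(c in known for c in conditions):
                known.add(conclusion)
                changed = True
    return known


# Each rule: (tuple of required statements, statement that follows from them).
rules = [
    (("Socrates is a man",), "Socrates is mortal"),
    (("Socrates is mortal",), "Socrates will die"),
]

derived = deduce({"Socrates is a man"}, rules)
print(sorted(derived))

# Remove the starting premise and everything built on top of it disappears too.
print(deduce(set(), rules))
```

The second call shows the weak point described above: every conclusion is only as good as the premise it stands on.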

Inductive

Inductive reasoning is another form of logic that humans use. It is the logic of science. We begin with observations about the world and from these observations attempt to draw a conclusion. But oftentimes we don’t see the whole picture. To the casual observer, it may appear that the sun moves through our sky in a way that suggests it goes around the Earth every day. While our reasoning may seem convincing on the surface, it is not reality. There are countless other examples from science of new discoveries radically changing our understanding of things. These discoveries force science to adjust its theories accordingly, and that’s a good thing. Changing our views based on new information is healthy.

These two forms of logic are mostly concerned with how we derive conclusions. It’s the process that is important to logic. Large language models do not have a thought process. This is what allows them to respond instantly. They do not think. Any prompt, under any context, already has (theoretically) an answer mapped to it. It’s just a matter of substitution and calculation, in the same way that a calculator computes without necessarily “thinking”.

The Doors of Perception

The main difference between ChatGPT, Bard, Llama, Bing, etc. and humanity is perception. Our five senses give us referents for understanding the world around us. There is no such thing for AI. They do not see, hear, feel, taste, or smell. They have nothing concrete to reference. So, when presented with an entirely novel situation, they’re likely to get it wrong[4]. The senses draw us out of the abstract world of pure thought and into the real world in which we live.

It’s through this shared, common experience of perception that we can establish things like intention. That’s because intentions exist outside of the language we use. We can certainly communicate our intentions and make them explicit using language, but this is rarely done at the time of communication. Instead, we are meant to infer the intention using contextual clues outside of language. Since large language models have only the tool of language to work with, it is impossible for them to infer the intention behind a prompt. This inability to determine intention is what gives rise to the evil AI trope: HAL-9000, GLaDOS, Mittens from Chess.com, Skynet.

Our senses provide us with referents that ultimately ground us. They also provide us with the data we use to reason. And it’s this combination of referents about the world we live in, plus the process by which we reason about this world, that gives us our sentience.
